What it is

A single-pane workspace built around the legal matter as the unit. Intake, matter setup, research, drafting, review, collaboration, billing, and archive all run through the same context object, the matter, rather than living in four or five vendor products that pretend to be integrated. The product question isn't "can AI draft contracts?" It's "can a mid-market firm replace four or five vendors with one workspace, and have the quality of the work go up rather than down?"

The prototype walks the three screens that carry most of a fee-earner's day: the dashboard (matter portfolio, deadlines, AI alerts), the research panel (matter-scoped retrieval with verified citations and a confidence score), and the drafting editor (a Word-style document with an ambient AI sidebar that flags missing clauses, style deviations, precedent matches, and cross-reference errors).

Three screens, one matter context

The dashboard is the entry point. Active matters — Meridian Holdings SHA, Oakridge Lease Review, Davies v TechCorp ET claim — are tiles with state, lead, partner and next deadline on the face. Above them, an AI Alerts strip surfaces the work the system did overnight: a new enquiry from Meridian flagged as a potential conflict against an existing matter (counterparty overlap), an exchange deadline two days out with no draft in the matter folder. The dashboard isn't a passive list — it's a queue the agent has already triaged.

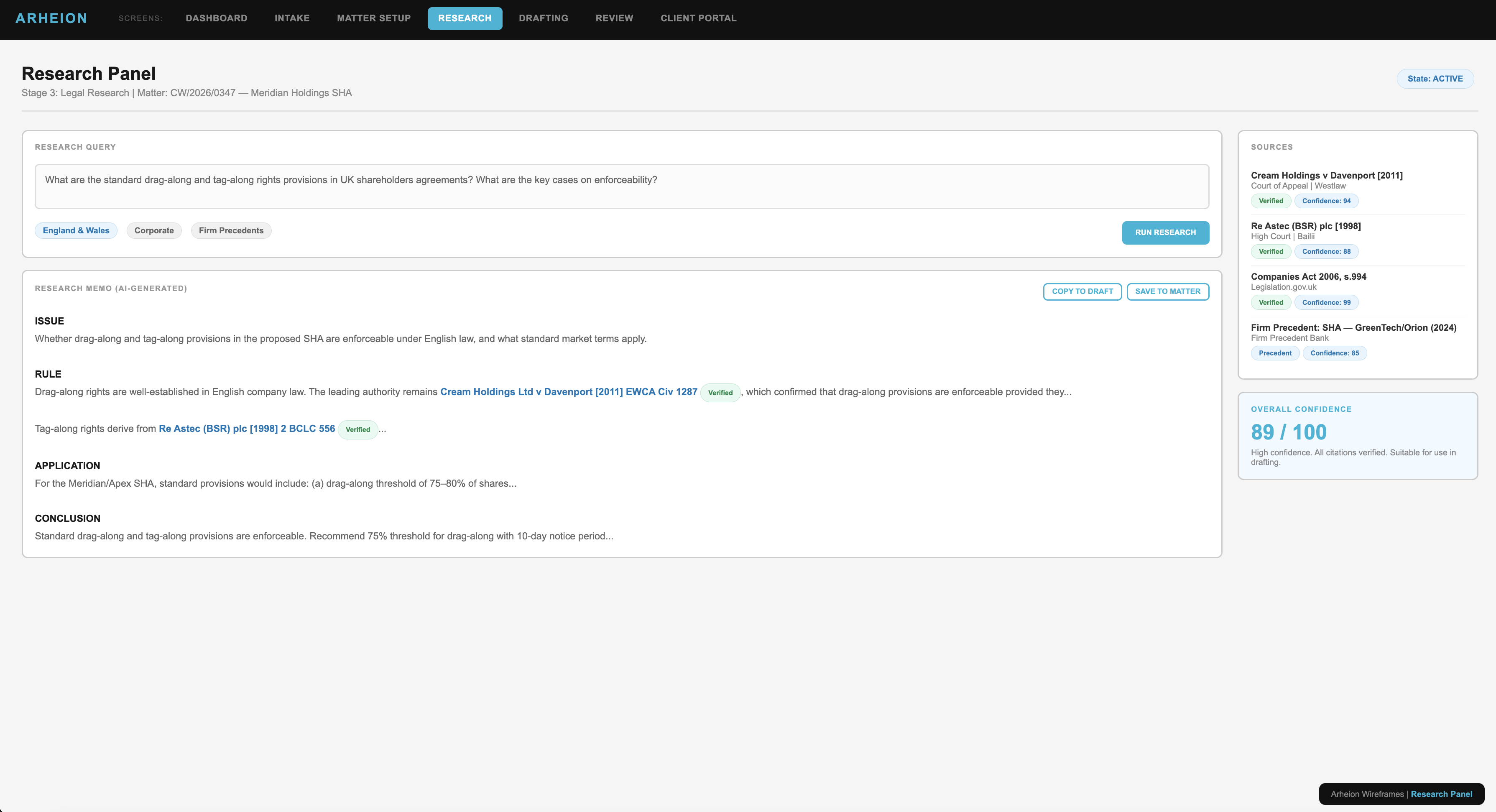
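The counterparty-overlap alert can be sketched as a lookup across existing matters. This is a minimal illustration, not the prototype's logic: the `Matter` and `Enquiry` shapes, the `findPotentialConflicts` helper, and the sample data are all hypothetical, and the matching is a naive case-insensitive comparison of names.

```typescript
// All names and data are hypothetical; the prototype's real model is not shown.
interface Matter {
  name: string;
  client: string;
  counterparties: string[];
}

interface Enquiry {
  from: string;           // prospective client making the enquiry
  otherParties: string[]; // parties on the other side, if known
}

// Naive normalisation: case-insensitive exact match on trimmed names.
const normalise = (name: string): string => name.trim().toLowerCase();

// Returns the names of existing matters where the enquirer is already a
// counterparty, or where an existing client sits on the other side.
function findPotentialConflicts(enquiry: Enquiry, matters: Matter[]): string[] {
  const enquirer = normalise(enquiry.from);
  const others = enquiry.otherParties.map(normalise);
  return matters
    .filter(
      (m) =>
        m.counterparties.map(normalise).indexOf(enquirer) !== -1 ||
        others.indexOf(normalise(m.client)) !== -1
    )
    .map((m) => m.name);
}

const existingMatters: Matter[] = [
  { name: "Example Matter A", client: "Example Client Ltd", counterparties: ["Meridian Holdings"] },
  { name: "Example Matter B", client: "Another Client LLP", counterparties: ["Unrelated Co"] },
];

const flagged = findPotentialConflicts(
  { from: "meridian holdings ", otherParties: [] },
  existingMatters
);
console.log(flagged);
```

A production conflict check would need entity resolution (group companies, aliases, former names) rather than string equality; the sketch only shows where the alert gets its signal.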

Open a matter and the research panel inherits the context. A query about drag-along and tag-along enforceability under English law runs against three planes: the firm's own corpus (matter files, prior memoranda, transcripts), the public corpus (case law, statute, regulator guidance) and the live matter context (intake, decisions, task state). Every authority returned carries a verified flag and a per-source confidence score, and the panel reports an overall confidence number for the memo it generates. A score of 89/100, for example, means high confidence, all citations verified, suitable for use in drafting. Scores in the lower bands ask for a broader search or a partner read.

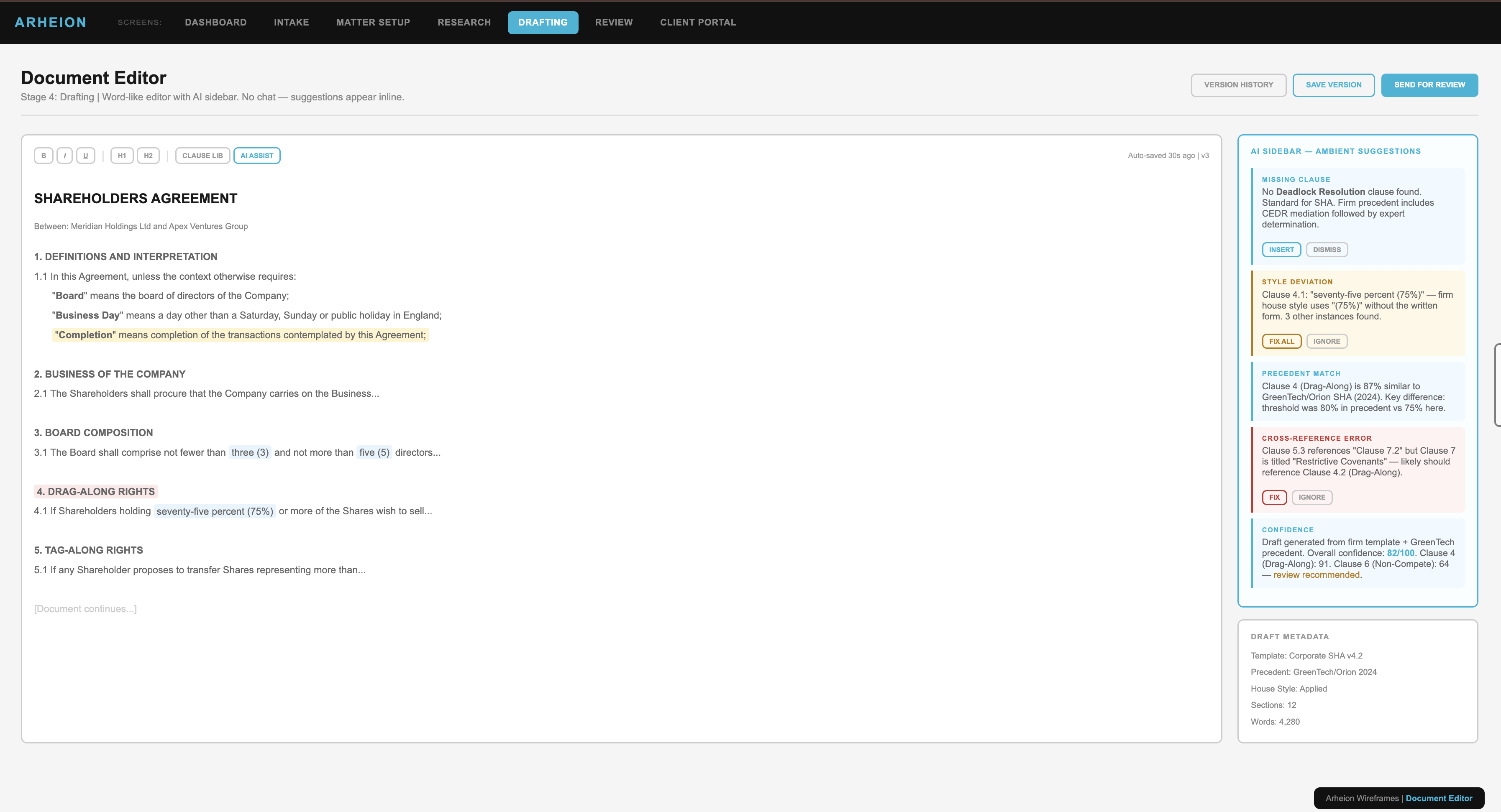
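One way to picture what the panel returns is a typed list of authorities, each tagged with its plane, verified flag and per-source score. The sketch below is an assumption about shape only: the `Authority` and `AuthoritySummary` types, the `summariseAuthorities` helper, the 0 to 100 scale for per-source scores, and the placeholder citations are hypothetical. How the overall memo score is derived is not stated, so the sketch does not attempt it.

```typescript
// Hypothetical types; the three planes mirror the ones named in the text.
type Plane = "firm" | "public" | "matter";

interface Authority {
  citation: string;
  plane: Plane;
  verified: boolean;
  confidence: number; // per-source score, assumed to be 0-100
}

interface AuthoritySummary {
  allVerified: boolean;
  unverified: string[];
  lowestConfidence: number | null; // null when there are no authorities
}

// Collects the facts a reviewer would want before trusting a memo:
// which citations are unverified, and how weak the weakest source is.
function summariseAuthorities(authorities: Authority[]): AuthoritySummary {
  const unverified = authorities.filter((a) => !a.verified).map((a) => a.citation);
  const lowestConfidence =
    authorities.length === 0
      ? null
      : authorities.reduce((min, a) => Math.min(min, a.confidence), Infinity);
  return {
    allVerified: authorities.length > 0 && unverified.length === 0,
    unverified,
    lowestConfidence,
  };
}

const summary = summariseAuthorities([
  { citation: "Placeholder authority 1", plane: "public", verified: true, confidence: 93 },
  { citation: "Placeholder memo 2", plane: "firm", verified: false, confidence: 71 },
]);
console.log(summary);
```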

From there, drafting. The editor is intentionally Word-shaped — the muscle memory is non-negotiable — but the right rail runs an ambient agent that watches the document and surfaces typed suggestions: missing clause (no Deadlock Resolution found, standard for SHA), style deviation (firm house style uses "(75%)" without the written form, three other instances detected), precedent match (Clause 4 is 87% similar to a recent firm precedent, key difference is the threshold), cross-reference error (Clause 5.3 references Clause 7.2 but Clause 7 is titled "Restrictive Covenants" — likely should reference 4.2). Each one carries an Insert / Fix / Dismiss control, and a draft-level confidence score sits in the corner so the partner reviewing on Monday morning has a number to interrogate.
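The simplest layer of the cross-reference check can be sketched as a scan for references to clause numbers that do not exist in the draft. This is a hypothetical illustration: the `Clause` shape, the `findDanglingReferences` helper, and the sample clauses are assumptions. It would not catch the case described above, where the referenced number exists but points at the wrong subject matter; that needs a semantic comparison this sketch does not attempt.

```typescript
// Hypothetical shapes; the prototype's document model is not shown.
interface Clause {
  number: string; // e.g. "5.3"
  text: string;
}

interface DanglingReference {
  from: string;   // clause containing the reference
  target: string; // clause number that could not be found
}

// Flags every "Clause N" / "Clause N.M" reference whose target number
// is not present in the draft.
function findDanglingReferences(clauses: Clause[]): DanglingReference[] {
  const known: Record<string, boolean> = {};
  for (const clause of clauses) known[clause.number] = true;

  const issues: DanglingReference[] = [];
  for (const clause of clauses) {
    // A fresh regex per clause avoids carrying lastIndex between texts.
    const pattern = /\bClause\s+(\d+(?:\.\d+)*)/g;
    let match: RegExpExecArray | null;
    while ((match = pattern.exec(clause.text)) !== null) {
      const target = String(match[1]);
      if (known[target] !== true) issues.push({ from: clause.number, target });
    }
  }
  return issues;
}

const draft: Clause[] = [
  { number: "4.2", text: "The Drag-Along Threshold is 75%." },
  { number: "5.3", text: "Subject to Clause 4.2 and Clause 9.1, a Tag-Along Notice may be served." },
  { number: "7", text: "Restrictive Covenants." },
];

const dangling = findDanglingReferences(draft);
console.log(dangling);
```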

Agent + automation, where it actually pays off

The category here isn't "AI for law" in the broad consumer sense. It's two specific shapes: an agent that holds matter context across stages and acts on its own initiative (conflict checks, deadline watch, citation verification, draft validation), and automation that removes the manual passes — copy-paste between research and draft, re-typing of clauses, hand-checking of cross-references, hand-rolled style enforcement.

That's deliberately a narrower claim than "AI lawyer". The agent doesn't argue the case; it does the supporting work the firm currently absorbs as overhead. The automation doesn't replace the partner read; it makes sure what reaches the partner is internally consistent before the read starts. The fee-earner's job — judgment, advice, client relationship — is exactly where the system steps back.

Confidence as the contract with the human

Every retrieval, every generated suggestion, every draft section carries a confidence band, and the band is what decides who acts: 90–100 auto-applies, 70–89 is surfaced as a suggestion the solicitor accepts or edits, 50–69 shows in amber and asks a partner-level reviewer, and anything below 50 surfaces in red with the question "do you want to broaden the search?"
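The band rule above reduces to a small routing function. The sketch below takes only the thresholds from the text; the `Band` labels and the `bandFor` name are hypothetical, and treating fractional scores by simple comparison (so 89.5 lands in the 70 to 89 band) is an assumption.

```typescript
// Hypothetical labels for the four bands described in the text.
type Band = "auto-apply" | "suggest" | "partner-review" | "broaden-search";

// Maps a 0-100 confidence score to the band that decides who acts.
function bandFor(score: number): Band {
  // The negated range check also rejects NaN.
  if (!(score >= 0 && score <= 100)) {
    throw new RangeError("confidence must be between 0 and 100, got " + score);
  }
  if (score >= 90) return "auto-apply";     // applied without review
  if (score >= 70) return "suggest";        // solicitor accepts or edits
  if (score >= 50) return "partner-review"; // amber, partner-level reviewer
  return "broaden-search";                  // red, prompt to broaden the search
}

console.log(bandFor(89));
```

Keeping the thresholds in one function means the UI colour, the reviewer assignment and the audit trail can all key off the same band value rather than re-deriving it from the raw score.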

It's the same human-in-the-loop (HITL) pattern as the order-management agent on this site, but the threshold is materially stricter: the cost of a wrong answer in a legal matter is a regulator letter, not a returned shipment. HITL isn't an after-the-fact safety net; it's the band structure the system was designed around from the first prompt.

Why mid-market law, specifically

The largest firms have the budget and the in-house data-science teams to wire bespoke pipelines into Westlaw, iManage, Aderant and Intapp. Solo practitioners can live in a clever search box. The firms in the middle — twenty to two hundred lawyers — are the ones quietly paying enterprise prices for a fragmented stack and getting the worst of both worlds: locked-in licensing, partial integration, no answer-grounding across tools, and a junior associate's worth of overhead spent reconciling the gaps.

Legal-stack is sized for that tier. One workspace, one matter context, EU data residency by default (Ireland), and a permissions model that already knows what a Trainee, Solicitor, Partner, Client and Admin are allowed to see, so the answer to "can we replace four or five vendors with one stack" doesn't have to be re-litigated by IT every quarter.

Stack

Built on Lovable as a single-page prototype, with the agent + retrieval layer mocked behind clean interfaces so the UX — matter cards, AI alert strip, citation chips, ambient draft sidebar, confidence bands — can be argued for in a partner-room demo before the indexing and integration pipelines are wired to real systems.
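Mocking behind an interface might look like the following sketch: the UI depends only on a retrieval contract, and a fixture-backed mock satisfies it until a real index exists. Every name here (`RetrievalService`, `MockRetrievalService`, `loadCitationChips`, the fixture data) is hypothetical and not taken from the prototype's code.

```typescript
// Hypothetical contract; the prototype's actual interfaces are not shown.
interface Authority {
  citation: string;
  verified: boolean;
  confidence: number;
}

interface RetrievalService {
  search(matterId: string, query: string): Promise<Authority[]>;
}

// Fixture-backed stand-in: returns canned results per matter and ignores the query.
class MockRetrievalService implements RetrievalService {
  private readonly fixtures: Record<string, Authority[]>;

  constructor(fixtures: Record<string, Authority[]>) {
    this.fixtures = fixtures;
  }

  search(matterId: string, _query: string): Promise<Authority[]> {
    return Promise.resolve(this.fixtures[matterId] ?? []);
  }
}

// UI-side code sees only the interface, so swapping in a real
// implementation later should not touch this function.
function loadCitationChips(
  service: RetrievalService,
  matterId: string,
  query: string
): Promise<string[]> {
  return service
    .search(matterId, query)
    .then((results) =>
      results.map((a) => (a.verified ? "verified" : "unverified") + ": " + a.citation)
    );
}

const mock = new MockRetrievalService({
  "matter-1": [{ citation: "Placeholder authority 1", verified: true, confidence: 93 }],
});

loadCitationChips(mock, "matter-1", "drag-along enforceability").then((chips) =>
  console.log(chips)
);
```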